Kaggle Competition Classify Leaves: Post-Competition Notes

Participation period: 2021/6/20 - 2021/6/25

Submissions: 37

Final rank: 57/165

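00 Preface

I joined this competition after seeing Mu Li's Dive into Deep Learning (动手学深度学习) course series on Bilibili, hoping to score a free signed book. The prizes ended up going as far down as the top 20 and I still missed out, which is a real pity. This is my first competition write-up; I hope to refine my analysis workflow after the competition and to reflect by studying other people's solutions.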

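Now a quick plug: I did this competition with inicls, a framework I built myself. As the README puts it:

“This is an image classification framework for learning and competitions. The goal is to experiment with image classification modules based on the image classification task and the PyTorch deep learning framework, and to give researchers a reference pipeline for classification tasks. It also tries to integrate many tricks, in the hope of helping those who plan to enter competitions. iniclassification aims to be a fairly all-round framework, offering a rich set of choices for optimizers, learning rate schedules, network architectures, and more.”

It borrows some code from mmlab/mmcls and builds on mmcv, following its config and registry implementation, then stitches in the tricks I find useful. The trick collection is still thin, but thanks to this competition I was able to iterate the framework into a usable state within a week.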

01 EDA

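I did not actually do a detailed EDA before the competition; I only learned roughly that the class imbalance is fairly severe. Below I reproduce the EDA, borrowing from the following Notebook: Leaf Classification|EDA|Model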

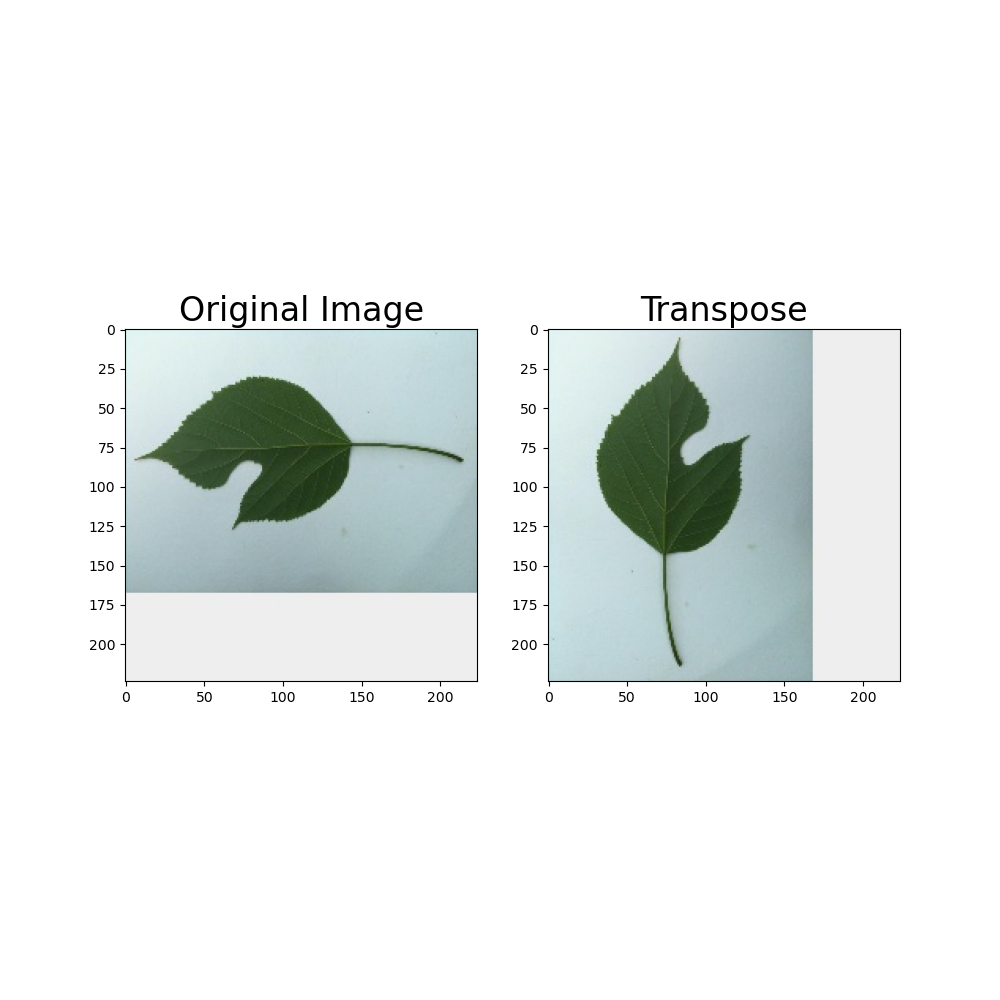

(1) Data augmentation visualization

(2) Class imbalance visualization


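02 Model selection

2-1 Framework usage

The main goal of this competition was to bring my inicls library to an initially usable state and to test the performance of the models in the timm model zoo, so I set up my configuration as follows: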

```python
model = 'resnest50d'
pretrained = True
num_classes = 176
train_ratio = 0.8
batch_size = 64
num_workers = 8
root = '/home/muyun99/data/dataset/competition_data/kaggle_classify_leaves/dataset'
dataset_type = 'competition_base_dataset'
img_norm_cfg = dict(
    mean=[123.675, 116.28, 103.53], std=[58.395, 57.12, 57.375], to_rgb=True)
train_pipeline = [
    dict(type='RandomCrop', size=224, padding=4),
    dict(type='RandomFlip', flip_prob=0.5, direction='horizontal'),
    dict(type='RandomFlip', flip_prob=0.5, direction='vertical'),
    dict(
        type='Normalize',
        mean=[123.675, 116.28, 103.53],
        std=[58.395, 57.12, 57.375],
        to_rgb=True),
    dict(type='ImageToTensor', keys=['img']),
    dict(type='ToTensor', keys=['gt_label']),
    dict(type='Collect', keys=['img', 'gt_label'])
]
valid_pipeline = [
    dict(
        type='Normalize',
        mean=[123.675, 116.28, 103.53],
        std=[58.395, 57.12, 57.375],
        to_rgb=True),
    dict(type='ImageToTensor', keys=['img']),
    dict(type='ToTensor', keys=['gt_label']),
    dict(type='Collect', keys=['img', 'gt_label'])
]
test_pipeline = [
    dict(type='Resize', size=(256, -1)),
    dict(type='CenterCrop', crop_size=224),
    dict(
        type='Normalize',
        mean=[123.675, 116.28, 103.53],
        std=[58.395, 57.12, 57.375],
        to_rgb=True),
    dict(type='ImageToTensor', keys=['img']),
    dict(type='Collect', keys=['img'])
]
data = dict(
    samples_per_gpu=16,
    workers_per_gpu=2,
    train=dict(
        type='competition_base_dataset',
        data_prefix=
        '/home/muyun99/data/dataset/competition_data/kaggle_classify_leaves/dataset',
        pipeline=[
            dict(type='RandomCrop', size=224, padding=4),
            dict(type='RandomFlip', flip_prob=0.5, direction='horizontal'),
            dict(type='RandomFlip', flip_prob=0.5, direction='vertical'),
            dict(
                type='Normalize',
                mean=[123.675, 116.28, 103.53],
                std=[58.395, 57.12, 57.375],
                to_rgb=True),
            dict(type='ImageToTensor', keys=['img']),
            dict(type='ToTensor', keys=['gt_label']),
            dict(type='Collect', keys=['img', 'gt_label'])
        ],
        ann_file='train_fold0.csv',
        test_mode=False),
    val=dict(
        type='competition_base_dataset',
        data_prefix=
        '/home/muyun99/data/dataset/competition_data/kaggle_classify_leaves/dataset',
        pipeline=[
            dict(
                type='Normalize',
                mean=[123.675, 116.28, 103.53],
                std=[58.395, 57.12, 57.375],
                to_rgb=True),
            dict(type='ImageToTensor', keys=['img']),
            dict(type='ToTensor', keys=['gt_label']),
            dict(type='Collect', keys=['img', 'gt_label'])
        ],
        ann_file='valid_fold0.csv',
        test_mode=False),
    test=dict(
        type='competition_base_dataset',
        data_prefix=
        '/home/muyun99/data/dataset/competition_data/kaggle_classify_leaves/dataset',
        pipeline=[
            dict(type='Resize', size=(256, -1)),
            dict(type='CenterCrop', crop_size=224),
            dict(
                type='Normalize',
                mean=[123.675, 116.28, 103.53],
                std=[58.395, 57.12, 57.375],
                to_rgb=True),
            dict(type='ImageToTensor', keys=['img']),
            dict(type='Collect', keys=['img'])
        ],
        ann_file='test.csv',
        test_mode=True))
optimizer = dict(type='SGD', lr=0.1)
loss = dict(type='CrossEntropyLoss')
lr_scheduler = dict(type='MultiStepLR', milestones=[40, 45], gamma=0.1)
max_epoch = 50
load_from = None
resume_from = None
workflow = [('train', 1)]
work_dir = './work_dirs/resnet18_b16x8_kaggle_leaves_tag_resnest50d_fold0'
random_seed = 2021
cudnn_benchmark = True
fold = 1
config = 'config/resnet/resnet18_b16x8_kaggle_leaves.py'
tag = 'resnest50d_fold0'
model_dir = './work_dirs/resnet18_b16x8_kaggle_leaves_tag_resnest50d_fold0/models'
tensorboard_dir = './work_dirs/resnet18_b16x8_kaggle_leaves_tag_resnest50d_fold0/tensorboard'
log_path = './work_dirs/resnet18_b16x8_kaggle_leaves_tag_resnest50d_fold0/logs/run.txt'
data_path = './work_dirs/resnet18_b16x8_kaggle_leaves_tag_resnest50d_fold0/data/data.json'
model_path = './work_dirs/resnet18_b16x8_kaggle_leaves_tag_resnest50d_fold0/models/best.pth'
valid_submission_path = './work_dirs/resnet18_b16x8_kaggle_leaves_tag_resnest50d_fold0/valid_submission_resnest50d_fold0.csv'
test_submission_path = './work_dirs/resnet18_b16x8_kaggle_leaves_tag_resnest50d_fold0/test_submission_resnest50d_fold0.csv'
```

This framework borrows heavily from mmcls, with the train function tweaked to my own habits. In the end, training needs nothing more than this one config file:

```bash
python train.py config/resnet/resnet18_b16x8_kaggle_leaves.py --tag resnet18
```

To switch networks, just change config.model:

```bash
python train.py config/resnet/resnet18_b16x8_kaggle_leaves.py --tag resnet18 --options "model=resnet18"
python train.py config/resnet/resnet18_b16x8_kaggle_leaves.py --tag resnet101 --options "model=resnet101"
python train.py config/resnet/resnet18_b16x8_kaggle_leaves.py --tag resnext101_32x8d --options "model=resnext101_32x8d"
python train.py config/resnet/resnet18_b16x8_kaggle_leaves.py --tag resnest101e --options "model=resnest101e"
```

To run multi-fold training, split the train and valid CSVs in advance and pass them in the same way:

```bash
python train.py config/resnet/resnet18_b16x8_kaggle_leaves.py --tag seresnext50_32x4d_fold0 --options "model=resnest50d" "data.train.ann_file=train_fold0.csv" "data.val.ann_file=valid_fold0.csv"
python train.py config/resnet/resnet18_b16x8_kaggle_leaves.py --tag seresnext50_32x4d_fold1 --options "model=resnest50d" "data.train.ann_file=train_fold1.csv" "data.val.ann_file=valid_fold1.csv"
python train.py config/resnet/resnet18_b16x8_kaggle_leaves.py --tag seresnext50_32x4d_fold2 --options "model=resnest50d" "data.train.ann_file=train_fold2.csv" "data.val.ann_file=valid_fold2.csv"
python train.py config/resnet/resnet18_b16x8_kaggle_leaves.py --tag seresnext50_32x4d_fold3 --options "model=resnest50d" "data.train.ann_file=train_fold3.csv" "data.val.ann_file=valid_fold3.csv"
python train.py config/resnet/resnet18_b16x8_kaggle_leaves.py --tag seresnext50_32x4d_fold4 --options "model=resnest50d" "data.train.ann_file=train_fold4.csv" "data.val.ann_file=valid_fold4.csv"
```
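The `--options "key=value"` overrides in the commands above can be pictured as dotted-key updates on a nested config dict. The following is a minimal sketch under that assumption, not the actual inicls implementation: `apply_options` and the reduced `cfg` are hypothetical names for illustration, and override values are kept as plain strings.

```python
import copy


def apply_options(cfg, options):
    """Return a copy of cfg with "a.b.c=value" overrides applied.

    Hypothetical helper: values stay strings, and a key that does not
    already exist raises KeyError so that typos are caught early.
    """
    merged = copy.deepcopy(cfg)
    for option in options:
        dotted_key, value = option.split("=", 1)
        *parents, leaf = dotted_key.split(".")
        node = merged
        for name in parents:
            node = node[name]  # walk down to the dict that owns the leaf
        if leaf not in node:
            raise KeyError(f"unknown config key: {dotted_key}")
        node[leaf] = value
    return merged


# Reduced stand-in for the full config (assumption: same key layout).
cfg = {
    "model": "resnest50d",
    "data": {
        "train": {"ann_file": "train_fold0.csv"},
        "val": {"ann_file": "valid_fold0.csv"},
    },
}

new_cfg = apply_options(
    cfg,
    [
        "model=resnet101",
        "data.train.ann_file=train_fold1.csv",
        "data.val.ann_file=valid_fold1.csv",
    ],
)
print(new_cfg["model"], new_cfg["data"]["train"]["ann_file"])
```

Working on a deep copy keeps the base config untouched, so the same base can be reused for every fold or model in a sweep.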

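Swapping out the optimizer and other components works the same way. Taking advantage of this, I ran through a large number of optimizers; unfortunately, because of the learning rate problem, the results are not very convincing. I will test again once the following features are added:

- Learning rate warmup: keeps a large learning rate at the start of training from destroying the pretrained weights

- An lr finder: helps find the initial learning rate setting and avoids a learning rate that is too large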
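As a rough illustration of the lr finder idea in the list above, here is a minimal learning rate range test on a toy one-parameter problem, written without PyTorch so it runs anywhere. It is a sketch under stated assumptions: `lr_range_test`, the toy loss, the divergence threshold of 4x the best loss, and the "learning rate at the lowest loss divided by 10" rule are illustrative choices, not part of inicls.

```python
import math


def lr_range_test(loss_and_grad, w0, lr_min=1e-5, lr_max=10.0, steps=100):
    """Sweep the learning rate exponentially and record the loss at each step.

    Stops early once the loss exceeds 4x the best loss seen (divergence).
    Returns the list of (lr, loss) pairs.
    """
    w = w0
    history = []
    best = math.inf
    for i in range(steps):
        lr = lr_min * (lr_max / lr_min) ** (i / (steps - 1))
        loss, grad = loss_and_grad(w)
        history.append((lr, loss))
        best = min(best, loss)
        if loss > 4 * best:
            break  # loss blew up: larger rates are not worth trying
        w -= lr * grad  # plain SGD step at the current learning rate
    return history


def toy_loss_and_grad(w):
    """Toy problem (assumption): loss = (w - 3)^2, minimised at w = 3."""
    return (w - 3.0) ** 2, 2.0 * (w - 3.0)


history = lr_range_test(toy_loss_and_grad, w0=0.0)
lr_at_min, min_loss = min(history, key=lambda pair: pair[1])
suggested_lr = lr_at_min / 10  # heuristic: back off from the edge of divergence
print(f"lowest loss at lr={lr_at_min:.4g}, suggested lr={suggested_lr:.4g}")
```

With a real model the same loop would run over mini-batches, and the recorded curve is usually inspected by eye rather than reduced to a single number.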

```bash
python train.py config/resnet/resnet18_b16x8_cifar10.py --tag A2GradExp --options "optimizer.type=A2GradExp"
python train.py config/resnet/resnet18_b16x8_cifar10.py --tag A2GradInc --options "optimizer.type=A2GradInc"
python train.py config/resnet/resnet18_b16x8_cifar10.py --tag A2GradUni --options "optimizer.type=A2GradUni"
```

2-2 Model tests

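After testing many models I got the local scores below. I submitted the results of every model that scored above 0.97 locally to the A leaderboard, which felt a bit like hoping brute force would work a miracle:

2-3 TTA model tests

The results were not what I had hoped for, so I added test-time augmentation (TTA), a brute-force trick I have often used before, with horizontal and vertical flips, then resubmitted to compare the local scores with the A leaderboard results, as follows:

2-4 Multi-fold model tests

2-5 Ensemble model tests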

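03 Post-competition solution study

For a detailed study of the post-competition solutions, see my Wiki: kaggle-Classify Leaves competition solution study. After going through everyone's solutions, I found that my poor result was most likely caused by the learning rate:

- First, no warmup was set, so the initial learning rate of 0.1 destroyed the knowledge in the pretrained model

- Second, the initial learning rate was too large for training to converge to a local optimum; in future I expect to use an lr finder to find a suitable learning rate, or simply start from 0.001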
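To make the warmup point concrete, the sketch below computes a per-epoch learning rate that ramps up linearly and then follows the same step decay as the MultiStepLR entry in the config (milestones=[40, 45], gamma=0.1). The function name `lr_at_epoch`, the 5-epoch warmup length, and the base rate of 0.001 (the value suggested above) are assumptions for illustration, not settings taken from inicls.

```python
def lr_at_epoch(epoch, base_lr=0.001, warmup_epochs=5,
                milestones=(40, 45), gamma=0.1):
    """Learning rate for a 0-indexed epoch: linear warmup, then step decay."""
    # Step decay: multiply by gamma once for every milestone already reached.
    decayed = base_lr * gamma ** sum(epoch >= m for m in milestones)
    if epoch < warmup_epochs:
        # Linear ramp from base_lr / warmup_epochs up to base_lr.
        return decayed * (epoch + 1) / warmup_epochs
    return decayed


schedule = [lr_at_epoch(epoch) for epoch in range(50)]
for epoch in (0, 4, 5, 39, 40, 45):
    print(epoch, f"{schedule[epoch]:.6f}")
```

The first epochs run at a fraction of the base rate, so the pretrained weights are only nudged while the freshly initialised classifier head settles.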

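04 Summary

4-1 Post-competition summary

This competition was basically a reproduction, on the inicls framework, of Baselines from earlier simple competitions. Below are the conclusions and the lessons learned:

- I ran many models and found that resnext_101 and se_resnext50 are fairly robust; they can basically serve as the baseline for tuning other parameters

- Pay attention to the order when tuning: tune the learning rate and the optimizer first, since these two determine the upper bound of model performance

- Always do cross-validation, starting with three folds if needed; this is the key to a robust local score

- Then, in the validation stage, pile on various tricks to see what they do, e.g. changing the loss (label smoothing, etc.) or changing the data augmentation

- While the machine grid-searches which tricks work, analyze the data further, e.g. class imbalance and label noise

- Analyze the results further: check whether the recognition rate of some class is particularly poor, and work out why

- TTA and Ensemble can be left until last, or a first version can be done early to raise the score before teaming up, but don't spend too much effort on them: they generally improve the score, so there is no need to waste time verifying that they work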
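For the cross-validation point in the list above, one way to prepare the fold files is a stratified split that spreads each class evenly across folds, which matters given the class imbalance noted in the EDA. This is a minimal standard-library sketch: `stratified_folds`, the `(image, label)` row layout, and the toy rows are assumptions for illustration, since the CSV layout that the dataset class expects is not shown here.

```python
import random
from collections import defaultdict


def stratified_folds(rows, n_folds=3, seed=2021):
    """Assign each (image, label) row to a fold, balancing every class.

    Returns a list of n_folds lists; fold k serves as the validation set
    for split k, and the remaining folds form its training set.
    """
    by_label = defaultdict(list)
    for row in rows:
        by_label[row[1]].append(row)

    rng = random.Random(seed)
    folds = [[] for _ in range(n_folds)]
    for label in sorted(by_label):
        members = by_label[label]
        rng.shuffle(members)
        # Deal the shuffled class members round-robin across the folds.
        for i, row in enumerate(members):
            folds[i % n_folds].append(row)
    return folds


# Toy data: 9 images of class "a" and 3 of class "b" (imbalanced on purpose).
rows = [(f"images/{i}.jpg", "a") for i in range(9)]
rows += [(f"images/{i}.jpg", "b") for i in range(9, 12)]

folds = stratified_folds(rows, n_folds=3)
for k, valid in enumerate(folds):
    train = [row for j, fold in enumerate(folds) if j != k for row in fold]
    print(f"fold {k}: {len(train)} train rows, {len(valid)} valid rows")
```

Writing each `train` / `valid` pair out with `csv.writer` under the `train_fold{k}.csv` / `valid_fold{k}.csv` naming used in the multi-fold commands would give files that can be passed through `data.train.ann_file` and `data.val.ann_file`.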

4-2 Planned tricks to add

- https://github.com/ildoonet/pytorch-randaugment

- https://github.com/kakaobrain/fast-autoaugment

- https://github.com/TylerYep/torchinfo

- https://github.com/Fangyh09/pytorch-receptive-field